Replay is the new debugger for agents

If your agent can’t be replayed, it can’t be trusted.

Not because the model is “unsafe.” Because production is messy:

- processes restart

- networks flake

- tools time out

- humans approve things late

- and retries are never as clean as you think

In the last week, we’ve seen durable workflow primitives land in mainstream agent tooling — automatic checkpointing, long-running orchestrations, and dashboards for run history. That’s the real signal.

Durability is also quietly becoming governance: who approved what, what ran under which permissions, and what is actually safe to replay once a human has reviewed it.

The hype version is “agents can run for days now.” The operator version is: debugging just turned into replay.

What changed and why it matters

The old mental model was: one prompt, one response. Failures were obvious: hallucination, wrong answer, wrong format.

The new mental model is: a run is a sequence. A run has:

- steps

- tool calls

- branching

- retries

- approvals

- time gaps

- and state that survives restarts

Once you persist execution, two things become true immediately:

- You now have history you can’t ignore.

- You can now re-run parts of the system — and accidentally duplicate real-world effects.

Durability doesn’t just solve uptime. It creates a new product requirement: replayability without damage.

Main argument: durable execution forces you to build a run ledger

Treating an agent like a chat thread is a category error. A chat thread is for humans. A production agent is closer to a workflow engine.

So here’s the stance:

The core artifact of a production agent is not “messages.” It’s a run ledger.

A run ledger is the thing you can hand to an operator and answer:

- what happened?

- what was attempted?

- what succeeded?

- what was retried?

- what was approved/denied?

- what was executed with which permissions?

- what is safe to replay?

If you don’t have that, you don’t have durable execution. You have durable confusion.
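To make the idea concrete, here is a minimal sketch of what a run ledger could look like as a data structure. Every name in it (`LedgerEntry`, `RunLedger`, the field names, the tool names) is hypothetical and chosen for illustration, and the "safe to replay" rule is deliberately conservative: only steps without side effects qualify.

```python
from dataclasses import dataclass, field
from typing import Any, Optional


@dataclass
class LedgerEntry:
    step: int                          # position of the step in the run
    tool: str                          # hypothetical tool name
    args: dict[str, Any]               # arguments exactly as executed
    status: str                        # "succeeded", "failed", or "denied"
    attempt: int = 1                   # values above 1 mean the step was retried
    side_effecting: bool = False       # True for writes (email, ticket, charge)
    approved_by: Optional[str] = None  # who approved the step, if anyone
    permissions: tuple[str, ...] = ()  # permissions the step ran under


@dataclass
class RunLedger:
    run_id: str
    entries: list[LedgerEntry] = field(default_factory=list)

    def record(self, entry: LedgerEntry) -> None:
        self.entries.append(entry)

    def retried(self) -> list[LedgerEntry]:
        return [e for e in self.entries if e.attempt > 1]

    def safe_to_replay(self) -> list[LedgerEntry]:
        # Assumption for this sketch: only steps without side effects
        # are replayable without further safeguards.
        return [e for e in self.entries if not e.side_effecting]


ledger = RunLedger(run_id="run-1")
ledger.record(LedgerEntry(1, "search_docs", {"q": "refund policy"}, "succeeded"))
ledger.record(LedgerEntry(2, "send_email", {"to": "a@example.com"}, "failed",
                          side_effecting=True))
ledger.record(LedgerEntry(2, "send_email", {"to": "a@example.com"}, "succeeded",
                          attempt=2, side_effecting=True,
                          approved_by="dana", permissions=("email:send",)))

print([e.tool for e in ledger.safe_to_replay()])  # ['search_docs']
print(len(ledger.retried()))                      # 1
```

The point is not the exact fields. It is that each operator question above maps to a query over structured entries instead of a scroll through a transcript.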

1) “Automatic checkpointing” is only useful if replay is deterministic

Checkpointing is the easy part. The hard part is re-running step N without corrupting the world.

That forces you to make your tool layer behave like grown-up software:

- idempotency keys for side-effecting actions (send email, create ticket, charge card)

- dedupe at the tool boundary (don’t double-create because you retried)

- exact input capture (tool args as executed, not “what we intended”)

- result capture (what the tool returned, including error payloads)

Without that, “resume” becomes “repeat but different,” and operators lose trust fast.
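A rough sketch of those four properties at the tool boundary might look like the following. It assumes an in-memory dict as a stand-in for a durable store, and `ToolBoundary`, `idempotency_key`, and `send_email` are hypothetical names rather than any framework's API. A real system would usually also pass the key to the downstream service, because a timeout can hide a call that actually succeeded.

```python
import hashlib
import json
from typing import Any, Callable


def idempotency_key(run_id: str, step: int, tool: str, args: dict[str, Any]) -> str:
    """Derive a stable key from the step identity and the exact arguments."""
    payload = json.dumps([run_id, step, tool, args], sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class ToolBoundary:
    def __init__(self) -> None:
        self.completed: dict[str, Any] = {}       # key -> recorded result
        self.attempts: list[dict[str, Any]] = []  # every real execution, errors included

    def call(self, run_id: str, step: int, tool: str,
             fn: Callable[..., Any], args: dict[str, Any]) -> Any:
        key = idempotency_key(run_id, step, tool, args)
        if key in self.completed:
            # Replay: return the recorded result instead of re-executing.
            return self.completed[key]
        try:
            result = fn(**args)
        except Exception as exc:
            # Capture the error payload, then let the caller decide on a retry.
            self.attempts.append({"key": key, "args": dict(args), "error": repr(exc)})
            raise
        self.attempts.append({"key": key, "args": dict(args), "result": result})
        self.completed[key] = result
        return result


sent: list[str] = []


def send_email(to: str, subject: str) -> str:
    sent.append(to)  # stands in for the real-world side effect
    return f"queued:{len(sent)}"


boundary = ToolBoundary()
args = {"to": "a@example.com", "subject": "Invoice"}
first = boundary.call("run-1", 3, "send_email", send_email, args)
second = boundary.call("run-1", 3, "send_email", send_email, args)  # replay of step 3

print(first == second, len(sent))  # True 1
```

Replaying step 3 returns the recorded result and sends nothing new, which is the behavior "resume" needs.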

2) Human approvals become part of the execution graph

When agents run in real teams, the critical steps are the ones that need a human. Not because humans love clicking buttons. Because the consequences are real.

The moment you add approvals, your workflow picks up new properties:

- time passes

- context changes

- policies change

- the person approving may not be the person who started the run

So you need approvals to be:

- attached to a specific step in the ledger

- auditable (who approved, when, with what context)

- replay-aware (approval shouldn’t silently re-execute side effects)
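One way to sketch those three requirements, with hypothetical names throughout (`Approval`, `ApprovalGate`, `execute_once`): the approval is keyed by run and step, it records who approved, when, and what they saw, and it can be used exactly once. The sketch marks the approval as consumed before running the action, which trades a possible lost action on a crash for a guarantee against double execution.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Callable


@dataclass
class Approval:
    run_id: str
    step: int
    approver: str
    approved_at: datetime
    context: str            # what the approver saw when deciding
    consumed: bool = False  # set once the approved action has been started


class ApprovalGate:
    def __init__(self) -> None:
        # In-memory stand-in for a durable store, keyed by (run_id, step).
        self._approvals: dict[tuple[str, int], Approval] = {}

    def approve(self, run_id: str, step: int, approver: str, context: str) -> Approval:
        approval = Approval(run_id, step, approver, datetime.now(timezone.utc), context)
        self._approvals[(run_id, step)] = approval
        return approval

    def execute_once(self, run_id: str, step: int, action: Callable[[], None]) -> bool:
        """Run the action only if this step holds an unused approval."""
        approval = self._approvals.get((run_id, step))
        if approval is None or approval.consumed:
            return False
        approval.consumed = True  # mark first, so a replay cannot run it twice
        action()
        return True


gate = ApprovalGate()
refunds: list[str] = []
gate.approve("run-1", 4, approver="dana", context="refund for order-1001")

ran_first = gate.execute_once("run-1", 4, lambda: refunds.append("order-1001"))
ran_again = gate.execute_once("run-1", 4, lambda: refunds.append("order-1001"))  # replay

print(ran_first, ran_again, len(refunds))  # True False 1
```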

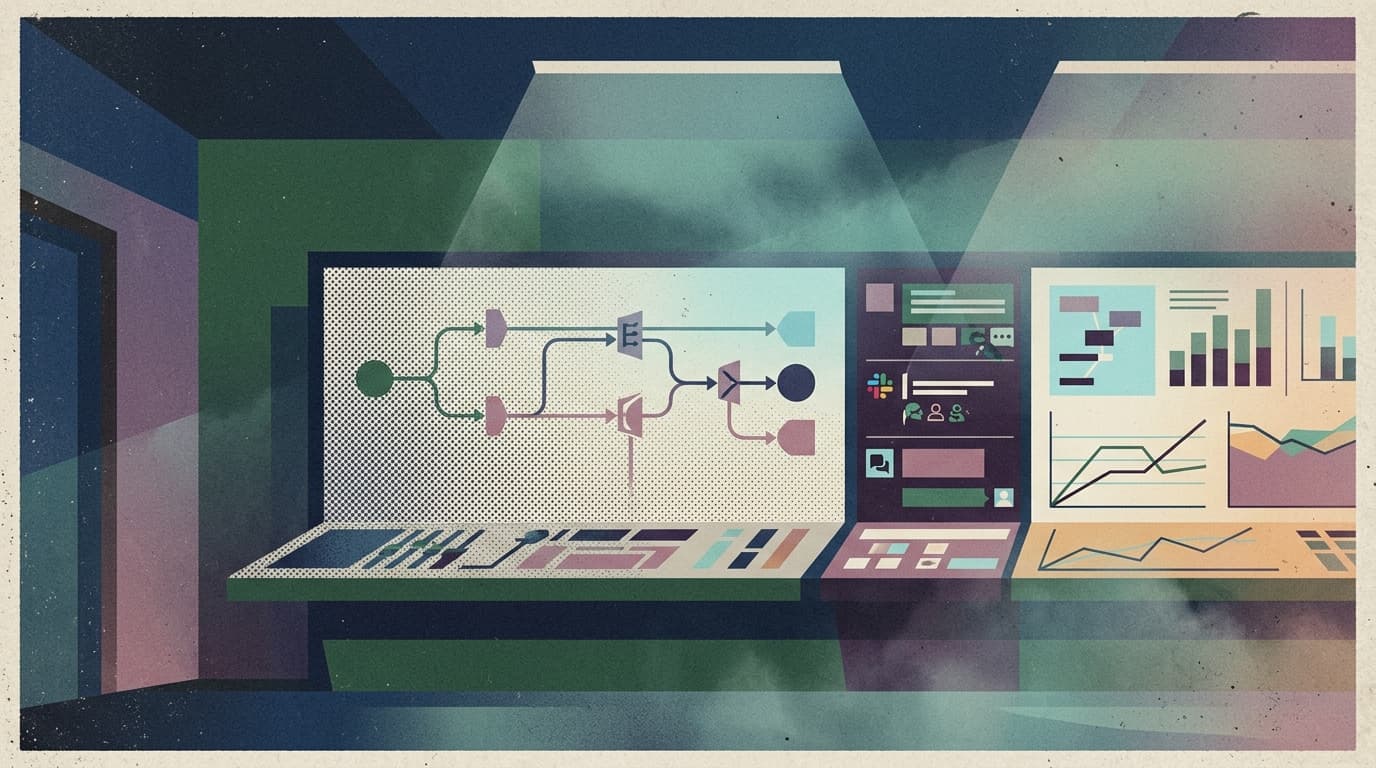

3) “Dashboard observability” isn’t a nice UI — it’s a safety mechanism

A workflow dashboard sounds like a dev convenience. In production, it’s how you avoid self-inflicted incidents.

Operators need to:

- inspect a run without reading 10,000 tokens of transcript

- jump to the exact failed step

- see the last tool payload and response

- know whether the next action is safe to retry

- and override or terminate the run cleanly

That’s not “observability.” That’s control.
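As an illustration of what the dashboard has to compute, here is a small sketch that reduces a run to the handful of facts an operator needs. The step shape (`step`, `tool`, `kind`, `status`, `args`, `response`) and the function name `summarize_run` are assumptions made for this example, and the retry rule is intentionally conservative.

```python
from typing import Any, Optional


def summarize_run(steps: list[dict[str, Any]]) -> dict[str, Any]:
    """Reduce a step list to what an operator needs at a glance."""
    failed: Optional[dict[str, Any]] = next(
        (s for s in steps if s["status"] == "failed"), None
    )
    if failed is None:
        return {"state": "ok", "steps": len(steps)}
    return {
        "state": "failed",
        "failed_step": failed["step"],
        "tool": failed["tool"],
        "last_payload": failed["args"],
        "last_response": failed["response"],
        # Assumption for this sketch: only reads are retried without review.
        "safe_to_retry": failed["kind"] == "read",
    }


steps = [
    {"step": 1, "tool": "lookup_order", "kind": "read", "status": "succeeded",
     "args": {"id": 1001}, "response": {"total": 40}},
    {"step": 2, "tool": "charge_card", "kind": "write", "status": "failed",
     "args": {"amount": 40}, "response": {"error": "timeout"}},
]

summary = summarize_run(steps)
print(summary["failed_step"], summary["safe_to_retry"])  # 2 False
```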

Practical implications for builders/operators/teams

If you’re building agents that do real work (not demos), make these decisions explicit:

Define your unit of replay. Is it a whole run? A step? A tool call? A subgraph?

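Split tools into read vs write. Reads are generally safe to re-run, though they may return different data the second time, so replay from the recorded result when you need consistency. Writes need idempotency and dedupe.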

Store tool contracts as artifacts. Treat tool inputs/outputs as production contracts — versioned, validated, and inspectable.

Design your state for operators, not models. Operators want “what happened” and “what’s next,” not a 200-message chat log.

Build the kill switch early. Durable systems that can’t stop are how you end up with “it’s still running… somewhere.”
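A kill switch can start very small. The sketch below assumes a cooperative design: the run checks a flag before each step and stops cleanly at the next boundary. `KillSwitch` and `run_steps` are hypothetical names, and in a durable system the flag would need to live in shared storage so a restarted worker still sees it.

```python
import threading
from typing import Callable


class KillSwitch:
    def __init__(self) -> None:
        self._stopped = threading.Event()
        self.reason = ""

    def stop(self, reason: str) -> None:
        self.reason = reason
        self._stopped.set()

    def is_set(self) -> bool:
        return self._stopped.is_set()


def run_steps(steps: list[Callable[[], str]], switch: KillSwitch) -> list[str]:
    """Run steps in order, checking the switch before each one."""
    done: list[str] = []
    for step in steps:
        if switch.is_set():
            done.append(f"terminated: {switch.reason}")
            break
        done.append(step())
    return done


switch = KillSwitch()


def fetch() -> str:
    return "fetched"


def draft() -> str:
    # Simulates an operator stopping the run while this step is in flight.
    switch.stop("operator override")
    return "drafted"


def send() -> str:
    return "sent"


history = run_steps([fetch, draft, send], switch)
print(history)  # ['fetched', 'drafted', 'terminated: operator override']
```

The in-flight step finishes, the side-effecting `send` step never starts, and the termination is recorded in the run history.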

Why this matters for OpenClaw users

OpenClaw-style systems already lean into tools, routing, long-running workflows, and real deployments. As durability becomes standard, the main pain shifts from “can it run?” to:

- can we replay safely?

- can we inspect what happened without archaeology?

- can we control retries, approvals, and permissions step-by-step?

That’s exactly where the “shell” matters.

OpenClaw gives you the runtime primitives. Clawpilot makes them operational:

- a place for run ledgers to live

- a Slack-native approval and control surface

- readable traces for teams (not just the original builder)

- and managed hosting so durability doesn’t turn into a DIY reliability project

Durable execution is arriving everywhere. The teams that win are the ones who treat replay as a product, not a debugging trick.

Closing

Agents are graduating from “chat with tools” to “workflows that must survive reality.”

The moment you cross that line, replay becomes the new debugger — and the run ledger becomes the thing your team actually ships.