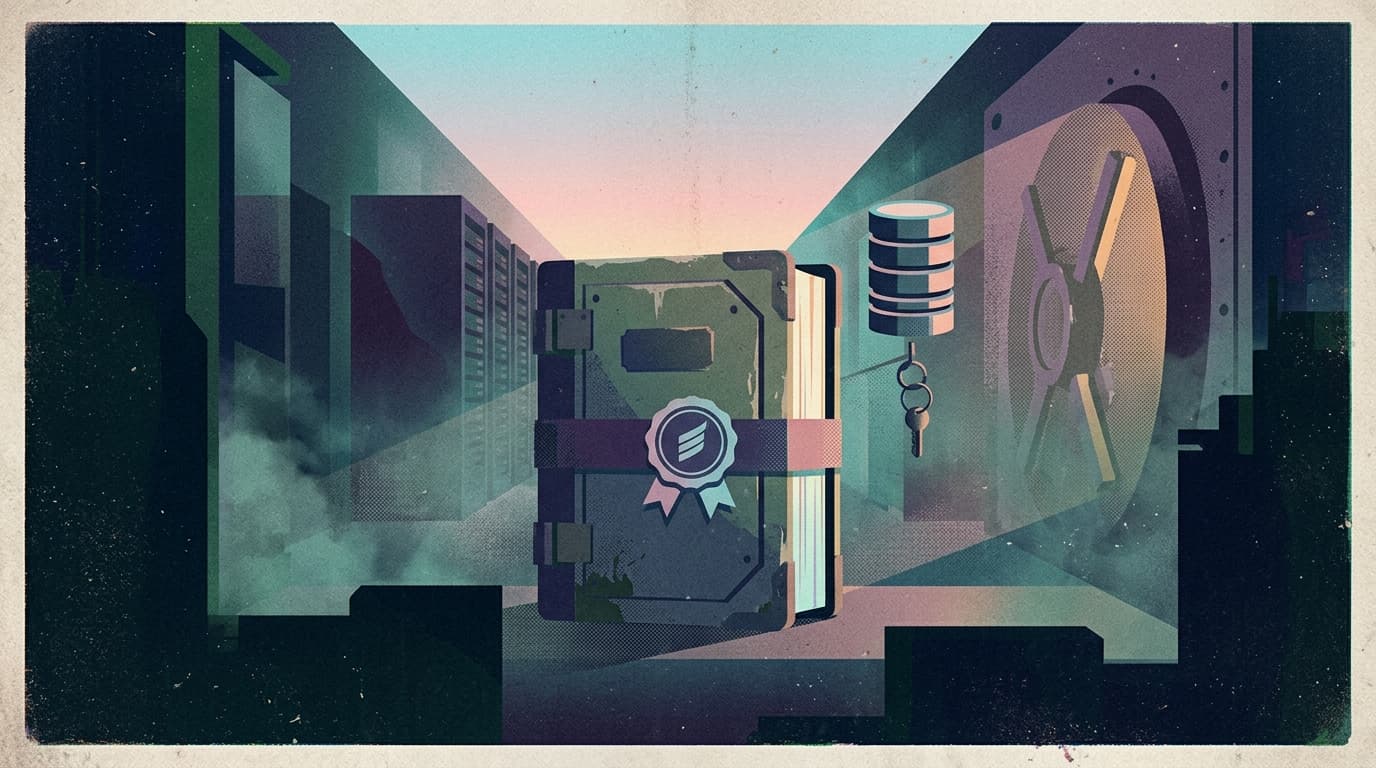

Checkpoint stores are now part of your security perimeter

A small security advisory landed this week that most teams will read the wrong way:

“Post-exploitation.” “Defense in depth.” “Only if the attacker can write to your database.”

Cool.

Now here’s the real translation for people shipping agents:

If your agent can resume from stored state, your stored state is now an execution surface.

Durable, long-running workflows are the future of production agents. And durable workflows require checkpointing. So this isn’t a LangGraph problem, or a Python problem — it’s the shape of the next incident class.

What changed and why it matters

State used to be boring:

- a conversation history,

- a task queue payload,

- a “last processed id” cursor.

Agent frameworks changed that. Checkpoints now contain rich objects, tool call plans, structured memory, and the glue that makes “pause → approve → resume” possible.

The advisory’s core idea is simple: unsafe deserialization turns “write access to state” into “code execution in the runtime.”
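To make that concrete, here is a deliberately harmless sketch of the bug class. It uses Python's `pickle` as the serializer, which is an assumption for illustration only: this post does not say which format the advisory covers. The `Payload` class is hypothetical and stands in for a record an attacker has written to the store.

```python
import pickle


class Payload:
    """Hypothetical stand-in for a tampered checkpoint record."""

    def __reduce__(self):
        # pickle stores "call this callable with these args to rebuild me".
        # Here the callable is eval on harmless arithmetic; an attacker
        # would choose something far less friendly.
        return (eval, ("6 * 7",))


blob = pickle.dumps(Payload())  # what lands in the backing store
restored = pickle.loads(blob)   # what the worker does on resume

# The worker does not get a Payload back. It gets whatever the callable
# returned, which means the callable ran inside the worker process.
print(type(restored).__name__, restored)
```

The point is not the arithmetic. Loading the bytes was enough to run a callable chosen by whoever wrote them.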

That should make every operator immediately ask a better question than “are we vulnerable?”

Where does our agent state live, who can write it, and what happens if it’s tampered with?

Because in the real world, “attacker can write to the backing store” is not science fiction:

- leaked database credentials,

- an overly-permissioned admin path,

- a compromised CI job that can touch prod,

- a lateral move from another service that shares the same network.

Checkpointing expands the blast radius of those mistakes.

Main argument: checkpointing is an integrity boundary, not a feature

Most teams treat checkpoints like a reliability primitive:

- retry on failure,

- resume where you left off,

- avoid re-running expensive steps.

That’s correct — and incomplete.

In production, your checkpoint store must be treated like a signed ledger of reality. If an attacker can edit it, they don’t just corrupt data. They can potentially corrupt control: which step runs next, and with which tools and arguments.

So the right mental model is:

- Your agent runtime is the CPU.

- Your checkpoint store is the disk.

- And if the disk is writable by the wrong thing, you don’t have “a bug.” You have a takeover.

Practical implications for builders, operators, and teams

1) Put checkpoints behind stricter permissions than “normal app data”

Your customer data being readable is bad. Your agent state being writable is worse: a read leaks data, while a write can steer what the runtime does next.

Operationally, that means:

- separate database/user/credentials for checkpoint tables/collections,

- narrow network access (private networking, no public ingress, no shared jump boxes),

- explicit write paths (only the worker that owns the workflow gets to write state),

- aggressive credential rotation when anything looks off.

2) Default to strict deserialization, then deliberately allow what you need

If your checkpoint format can reconstruct objects, you need an allowlist of the types it is permitted to reconstruct. Not “we log a warning.” Not “we’ll do it later.”

Strict-by-default will break some things — good. That’s you discovering which parts of your state are dangerously magical.
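A minimal sketch of what "strict, then allow" can look like, assuming your checkpoints are pickled (your framework may use a different format with its own allowlist mechanism). It follows the `find_class` override pattern described in the Python `pickle` documentation. `ALLOWED_GLOBALS` and `load_checkpoint` are hypothetical names, and the allowlist contents are examples only.

```python
import io
import pickle

# Hypothetical allowlist: the only (module, name) pairs a checkpoint may
# reference. Everything else is rejected, including builtins like eval.
ALLOWED_GLOBALS = {
    ("collections", "OrderedDict"),
    ("datetime", "datetime"),
}


class AllowlistUnpickler(pickle.Unpickler):
    def find_class(self, module, name):
        # Called whenever the pickle stream asks to import a global.
        if (module, name) in ALLOWED_GLOBALS:
            return super().find_class(module, name)
        raise pickle.UnpicklingError(
            f"checkpoint references disallowed global {module}.{name}"
        )


def load_checkpoint(blob: bytes):
    """Deserialize a checkpoint, refusing any type not on the allowlist."""
    return AllowlistUnpickler(io.BytesIO(blob)).load()
```

Plain dicts, lists, strings, and numbers load without touching the allowlist, so the list only needs to name the richer types your state really depends on. Every rejection is a prompt to decide whether that type belongs in a checkpoint at all.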

3) Add tamper-evidence for state, not just audit logs for actions

Audit logs tell you what happened. Tamper-evidence tells you if the past has been rewritten.

Practical options (any one helps, but pick at least one):

- cryptographically sign checkpoints (or checkpoint batches),

- write checkpoints append-only and versioned,

- store a hash chain so edits become detectable.
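As a rough sketch of the signing option, here is an HMAC tag prepended to each serialized checkpoint, using only the standard library. The function names and the tag-then-payload layout are assumptions for illustration. The key must live somewhere the database cannot reach (a secrets manager, for example), otherwise an attacker with write access can simply re-sign.

```python
import hashlib
import hmac

TAG_LEN = hashlib.sha256().digest_size  # 32 bytes for SHA-256


def sign_checkpoint(key: bytes, payload: bytes) -> bytes:
    """Return tag || payload, where tag is HMAC-SHA256 over the payload."""
    tag = hmac.new(key, payload, hashlib.sha256).digest()
    return tag + payload


def verify_checkpoint(key: bytes, blob: bytes) -> bytes:
    """Return the payload if the tag matches, otherwise raise ValueError."""
    if len(blob) < TAG_LEN:
        raise ValueError("checkpoint too short to contain a tag")
    tag, payload = blob[:TAG_LEN], blob[TAG_LEN:]
    expected = hmac.new(key, payload, hashlib.sha256).digest()
    # compare_digest avoids leaking how many leading bytes matched.
    if not hmac.compare_digest(tag, expected):
        raise ValueError("checkpoint failed integrity check")
    return payload
```

Verify before you deserialize, not after. Note that a tag like this detects edits but not replay: an old, validly signed checkpoint still verifies, which is one reason to also bind a run ID and version into the signed bytes.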

If that sounds heavy, remember: agents are becoming your automation layer. This is the automation equivalent of protecting your source of truth.
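It is also lighter than it sounds. The hash-chain option, for instance, fits in a few lines. This is a sketch under assumptions: checkpoints are JSON-serializable dicts, the chain is an in-memory list standing in for your table, and names like `append_entry` and `first_broken_index` are hypothetical.

```python
import hashlib
import json
from typing import List, Optional

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(prev_hash: str, payload: dict) -> str:
    # Canonical JSON so the same payload always hashes the same way.
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_entry(chain: List[dict], payload: dict) -> None:
    prev = chain[-1]["hash"] if chain else GENESIS
    chain.append(
        {"prev": prev, "payload": payload, "hash": entry_hash(prev, payload)}
    )


def first_broken_index(chain: List[dict]) -> Optional[int]:
    """Return the index of the first entry that fails to verify, or None."""
    prev = GENESIS
    for i, entry in enumerate(chain):
        if entry["prev"] != prev or entry["hash"] != entry_hash(prev, entry["payload"]):
            return i
        prev = entry["hash"]
    return None
```

A chain on its own only catches partial edits. Someone who can rewrite every row can recompute every hash, so the latest hash needs to be recorded somewhere the checkpoint writer's credentials cannot reach.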

4) Design “resume” as a privileged operation

Teams build pause/resume like a UX feature. Treat it like a production switch.

- resuming from an old checkpoint should be deliberate,

- resuming across versions should be gated,

- resuming into a new tool/permission set should require approval.
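One way to sketch those three rules as a gate that runs before any resume. Everything here is hypothetical: the `CheckpointMeta` fields, the 24-hour default, and the idea that tool permissions are a set of scope strings are assumptions, not a real framework API.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import FrozenSet, List


@dataclass(frozen=True)
class CheckpointMeta:
    created_at: datetime         # when the checkpoint was written
    code_version: str            # workflow/code version that wrote it
    tool_scopes: FrozenSet[str]  # tools/permissions the run had back then


def approval_reasons(
    meta: CheckpointMeta,
    now: datetime,
    running_version: str,
    current_scopes: FrozenSet[str],
    max_age: timedelta = timedelta(hours=24),
) -> List[str]:
    """Return the reasons this resume needs a human; empty means proceed."""
    reasons = []
    if now - meta.created_at > max_age:
        reasons.append("checkpoint is older than the allowed age")
    if meta.code_version != running_version:
        reasons.append("checkpoint was written by a different version")
    gained = current_scopes - meta.tool_scopes
    if gained:
        reasons.append("resume would gain tool scopes: " + ", ".join(sorted(gained)))
    return reasons
```

An empty list means the resume can go ahead automatically. Anything else gets routed to whoever is allowed to approve it, with the reasons attached.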

Otherwise you’ll eventually ship a workflow that resumes into a different world than it was born in — and nobody notices until it hurts.

Why this matters for OpenClaw users

OpenClaw makes long-running, tool-using agents practical — which means you will eventually want:

- durable workflows,

- resumable runs,

- team handoffs,

- and persistent memory.

The checkpoint store becomes part of your runtime. So you need a real shell around OpenClaw that treats state as infrastructure:

- sane defaults for where state lives and who can write it,

- guardrails around resume/replay,

- auditability that answers “who changed what, when,”

- and an operator UI that makes “pause / approve / resume” safe for teams.

That’s the difference between “we built agents” and “we can run agents without giving security a panic attack.”

Closing takeaway

Durable execution is non-negotiable for production agents.

But the moment your agent can resume from a checkpoint, your persistence layer stops being boring. Treat it like a security boundary, or it will eventually be treated like one — by an attacker.