The Bottleneck Is Now Agent Control Surfaces, Not Model IQ

The biggest shift this week is not another "smarter model" headline.

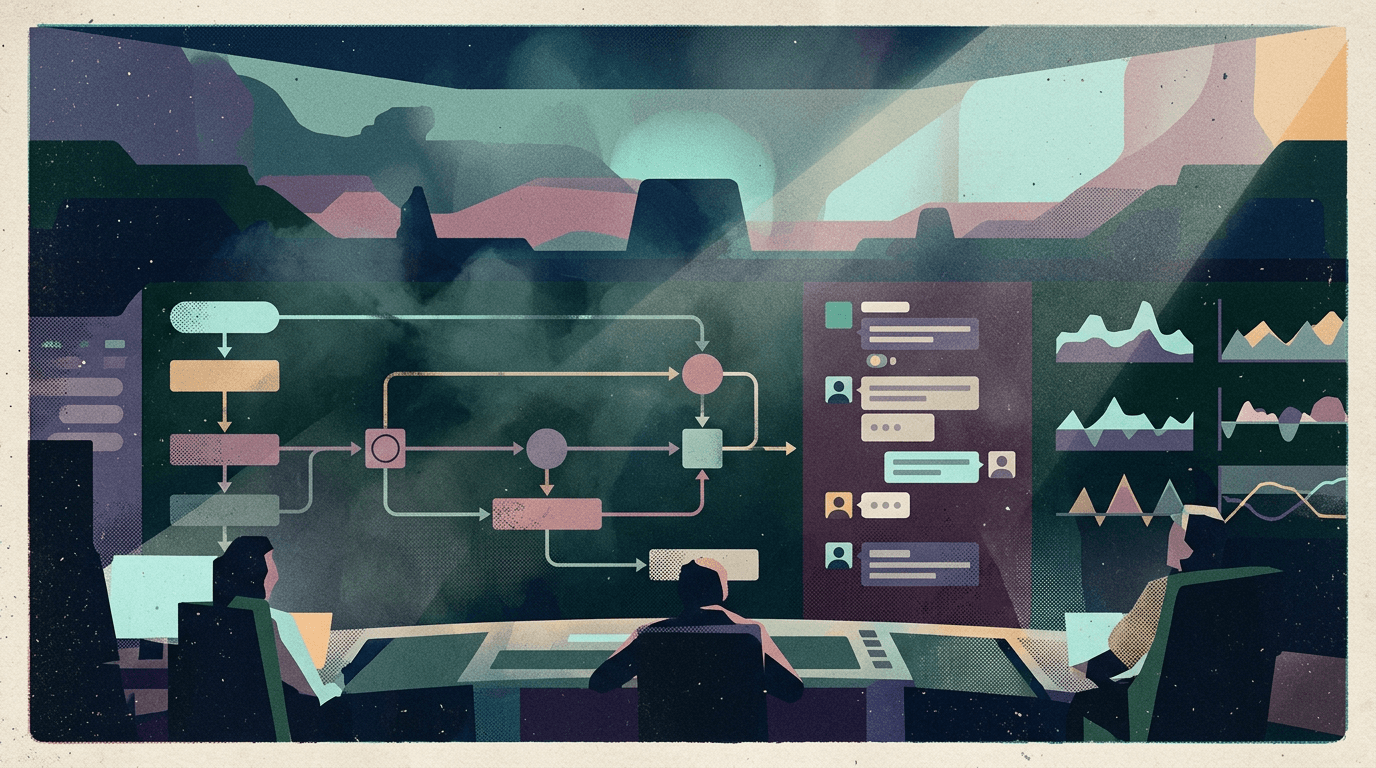

It’s that serious teams are finally treating agent control surfaces as production infrastructure: how tools are selected, how actions are reviewed, how fast mode is toggled, and how humans intervene before side effects happen.

What changed in the last 24-72 hours

1) OpenClaw shipped operator-facing controls, not just model tweaks

The latest OpenClaw release (Mar 13) is packed with practical operator upgrades:

- Dashboard v2 with modular views for chat, config, sessions, and agents

- shared `/fast` controls across TUI, Control UI, and ACP

- provider-plugin architecture improvements for Ollama, vLLM, and SGLang

- Slack Block Kit delivery path support

- short-lived bootstrap tokens for safer pairing

That release profile says a lot: teams need governed speed, clear visibility, and safe defaults more than they need one more benchmark spike.

2) Foundation model platforms are attacking tool-context overload

OpenAI’s agent tooling direction (Responses API + built-in tools + tracing) and Anthropic’s advanced tool-use releases (Tool Search Tool + Programmatic Tool Calling + tool examples) converge on the same problem:

too many tools and too much intermediate context break reliability before raw model intelligence does.

Anthropic’s examples are especially direct: loading entire tool libraries upfront burns context and hurts accuracy; selective tool discovery improves both context efficiency and tool-call quality.
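
To make the idea of selective tool discovery concrete, here is a minimal sketch. It is not Anthropic's Tool Search Tool or any vendor API: `ToolSpec`, `ToolCatalog`, the tool names, and the keyword-overlap ranking are all hypothetical, chosen only to show full schemas staying out of context until a query asks for them.

```python
import re
from dataclasses import dataclass, field

# Hypothetical stopword list so filler words do not count as matches.
STOPWORDS = {"a", "an", "the", "to", "in", "for"}


@dataclass
class ToolSpec:
    name: str
    description: str
    schema: dict = field(default_factory=dict)  # full parameter schema, loaded on demand


def _tokens(text: str) -> set[str]:
    """Lowercase alphanumeric tokens, minus stopwords."""
    return set(re.findall(r"[a-z0-9]+", text.lower())) - STOPWORDS


class ToolCatalog:
    """Holds every tool, but hands out only the few that match a query."""

    def __init__(self, tools):
        self._tools = {tool.name: tool for tool in tools}

    def search(self, query: str, limit: int = 3) -> list[ToolSpec]:
        wanted = _tokens(query)
        scored = []
        for tool in self._tools.values():
            overlap = len(wanted & _tokens(tool.name + " " + tool.description))
            if overlap:
                scored.append((overlap, tool.name, tool))
        # Highest overlap first; tool name breaks ties deterministically.
        scored.sort(key=lambda item: (-item[0], item[1]))
        return [tool for _, _, tool in scored[:limit]]


catalog = ToolCatalog([
    ToolSpec("slack_post_message", "Post a message to a Slack channel"),
    ToolSpec("github_create_issue", "Create an issue in a GitHub repository"),
    ToolSpec("calendar_create_event", "Create a calendar event for a meeting"),
    ToolSpec("db_run_query", "Run a read-only SQL query against the database"),
])

# Only the matching tools would be added to the model's context.
loaded = catalog.search("post a status message to the team Slack channel", limit=2)
loaded_names = [tool.name for tool in loaded]
print(loaded_names)  # ['slack_post_message']
```

A production system would likely rank with embeddings or a provider's own search tool rather than keyword overlap. The point here is the shape: a four-tool catalog contributes one schema to context, not four.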

3) Builder discussions are still pointing at tool-call failure, not reasoning novelty

Recent Reddit and operator discussions keep describing the same production pattern: agents sound coherent, then fail at the operational layer (wrong tool, wrong parameters, wrong order). Reliability writeups on Dev.to similarly focus on measurable ops metrics over "agent vibes."
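
One way to turn "wrong tool, wrong params, wrong order" into a number is to compare an agent's tool calls against an expected sequence. The sketch below assumes a labeled set of expected calls exists; `ToolCall`, `score_tool_calls`, and the example tool names are hypothetical and not taken from any of the sources mentioned.

```python
from dataclasses import dataclass


@dataclass
class ToolCall:
    tool: str
    params: dict


def score_tool_calls(expected: list[ToolCall], actual: list[ToolCall]) -> dict:
    """Compare calls position by position and tally each failure type."""
    wrong_tool = 0
    wrong_params = 0
    for exp, act in zip(expected, actual):
        if exp.tool != act.tool:
            wrong_tool += 1
        elif exp.params != act.params:
            wrong_params += 1

    compared = min(len(expected), len(actual))
    correct = compared - wrong_tool - wrong_params
    longest = max(len(expected), len(actual))

    expected_names = [call.tool for call in expected]
    actual_names = [call.tool for call in actual]
    # Same set of tools, different sequence: an ordering failure.
    wrong_order = (
        expected_names != actual_names
        and sorted(expected_names) == sorted(actual_names)
    )

    return {
        "correct": correct,
        "wrong_tool": wrong_tool,
        "wrong_params": wrong_params,
        "wrong_order": wrong_order,
        "missing_or_extra": abs(len(expected) - len(actual)),
        "accuracy": correct / longest if longest else 1.0,
    }


expected = [
    ToolCall("lookup_customer", {"email": "a@example.com"}),
    ToolCall("create_ticket", {"customer_id": 42, "priority": "high"}),
]
actual = [
    ToolCall("lookup_customer", {"email": "a@example.com"}),
    ToolCall("create_ticket", {"customer_id": 42, "priority": "low"}),
]

report = score_tool_calls(expected, actual)
print(report)
```

In this example the agent picked the right tools in the right order but sent the wrong priority, so the report shows one correct call and one `wrong_params` failure. Fluent output alone would hide that.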

Main argument: reliability is now a control-plane problem

The practical bottleneck in 2026 is this:

- model layer is getting stronger

- tool ecosystems are getting wider

- workflows are getting longer

- but operator control surfaces are often still underdesigned

When control surfaces lag behind the rest of the stack, teams get the worst combination: confident outputs plus fragile execution.

The winning architecture pattern is becoming clearer:

- Deferred tool loading instead of stuffing every integration into prompt context

- Explicit fast/governed lanes so latency optimization doesn’t bypass policy

- Observable execution traces so failures are diagnosable, not mysterious

- Team-native intervention surfaces (Slack/UI) where people already work

This is less glamorous than "fully autonomous agents," but it is how systems stay alive in production.
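
The fast/governed lane and execution-trace items in the pattern above can be sketched in a few lines. This is an illustration under stated assumptions, not how OpenClaw's `/fast` control works internally: `Router`, `Decision`, `SIDE_EFFECT_TOOLS`, and the tool names are hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical policy: tools that change the outside world.
SIDE_EFFECT_TOOLS = {"send_email", "deploy_service"}


@dataclass
class Decision:
    tool: str
    lane: str  # "fast" or "governed"
    needs_approval: bool


@dataclass
class Router:
    fast_mode: bool = False
    trace: list = field(default_factory=list)

    def route(self, tool: str) -> Decision:
        has_side_effects = tool in SIDE_EFFECT_TOOLS
        # Fast mode speeds up read-only calls but never skips policy
        # for tools with side effects.
        lane = "fast" if self.fast_mode and not has_side_effects else "governed"
        decision = Decision(tool, lane, needs_approval=has_side_effects)
        # Record every routing decision so failures can be diagnosed later.
        self.trace.append({
            "tool": tool,
            "lane": lane,
            "fast_mode": self.fast_mode,
            "needs_approval": has_side_effects,
        })
        return decision


router = Router(fast_mode=True)
read_decision = router.route("search_docs")
write_decision = router.route("send_email")

for entry in router.trace:
    print(entry)
```

With fast mode on, `search_docs` takes the fast lane while `send_email` still lands in the governed lane and is flagged for approval. The trace records both decisions, so the latency switch never doubles as a policy bypass.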

Implications for builders and operators

- Stop scoring success by demo fluency; score it by tool-call correctness and recoverability.

- Treat `/fast` or equivalent as a risk control, not just a performance switch.

- Keep tool catalogs discoverable and scoped; huge always-loaded schemas are reliability debt.

- Put review and escalation in channels teams actually use (Slack, shared dashboards), not hidden logs.

- Design for "interruption + resume" as a first-class behavior in long-running workflows.
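
As a rough illustration of "interruption + resume" from the list above, the sketch below checkpoints a workflow before each step so a human can pause it and a later process can pick it up. `Checkpoint`, `run`, and the three-step workflow are hypothetical; a real system would persist the checkpoint to durable storage and not to an in-memory string.

```python
import json
from dataclasses import dataclass, field


@dataclass
class Checkpoint:
    next_step: int = 0
    results: list = field(default_factory=list)

    def dumps(self) -> str:
        return json.dumps({"next_step": self.next_step, "results": self.results})

    @classmethod
    def loads(cls, raw: str) -> "Checkpoint":
        data = json.loads(raw)
        return cls(next_step=data["next_step"], results=data["results"])


def run(steps, checkpoint, should_pause=lambda index: False):
    """Run steps from checkpoint.next_step onward.

    Returns (checkpoint, finished). Pausing happens before a step runs,
    so an interrupted step has produced no side effects.
    """
    while checkpoint.next_step < len(steps):
        index = checkpoint.next_step
        if should_pause(index):
            return checkpoint, False
        checkpoint.results.append(steps[index]())
        checkpoint.next_step = index + 1
    return checkpoint, True


steps = [lambda: "fetched", lambda: "drafted", lambda: "sent"]

# An operator interrupts before the side-effecting third step.
paused, finished_first = run(steps, Checkpoint(), should_pause=lambda i: i == 2)
saved = paused.dumps()

# Later, after review, the workflow resumes from the saved state.
resumed, finished_second = run(steps, Checkpoint.loads(saved))
print(resumed.results)
```

The design choice worth noting is that the pause check runs before the step, not after it. Review then happens while the side effect is still avoidable.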

Why this matters for OpenClaw users

If you use OpenClaw today, the capability is already there: multi-tool execution, long-running sessions, routing, memory, cron, and channel-native delivery.

The gap is operational polish: making that capability consistently usable by a team, under time pressure, with sane defaults and clear controls.

That is exactly where Clawpilot fits. OpenClaw is the runtime power; Clawpilot is the shell that makes it practical to operate daily across infrastructure, app UX, and Slack-native team workflows.

Closing take

The market is moving from "can the model do this?" to "can our team run this safely every day?"

The second question is where real winners are being decided.