Critical Failure Localization Is the New Eval Baseline

Passing evals is no longer enough.

The most useful signal from the last 24–72 hours is that serious teams are shifting from “did the task succeed?” to “where did it become unrecoverable?”

That is a bigger operational change than another model leaderboard jump.

What changed this week

1) Microsoft open-sourced AgentRx, built around critical failure step detection

Microsoft Research introduced AgentRx as a debugging framework that identifies the first unrecoverable failure in an agent trajectory and ties it to a grounded failure taxonomy.

This matters because long, stochastic, multi-step runs break traditional pass/fail evaluation.

2) Databricks acquired Quotient AI to harden production agent evaluation loops

Databricks announced its acquisition of Quotient AI to strengthen continuous evaluation and reinforcement learning for agents in production systems.

That move is blunt market validation: evaluation is becoming a runtime feedback loop, not a pre-launch checklist.

3) OpenAI Agents SDK releases continue tightening trace-level reliability

The March openai-agents-python releases include reliability-focused fixes, such as clearer tracing and safer behavior in multi-turn and tool-heavy flows.

When SDK release notes are dominated by trace and run-behavior fixes, the industry priority is obvious: root-cause speed beats demo polish.

4) Observability vendors are framing monitoring as a deployment gate

Monte Carlo’s March 12 launch positions agent observability across context, performance, behavior, and outputs as a prerequisite for shipping, not a nice-to-have.

Whether you buy their product or not, the framing is right: no visibility, no trust.

Main argument

The winning reliability metric in 2026 is time-to-first-critical-failure-identification.

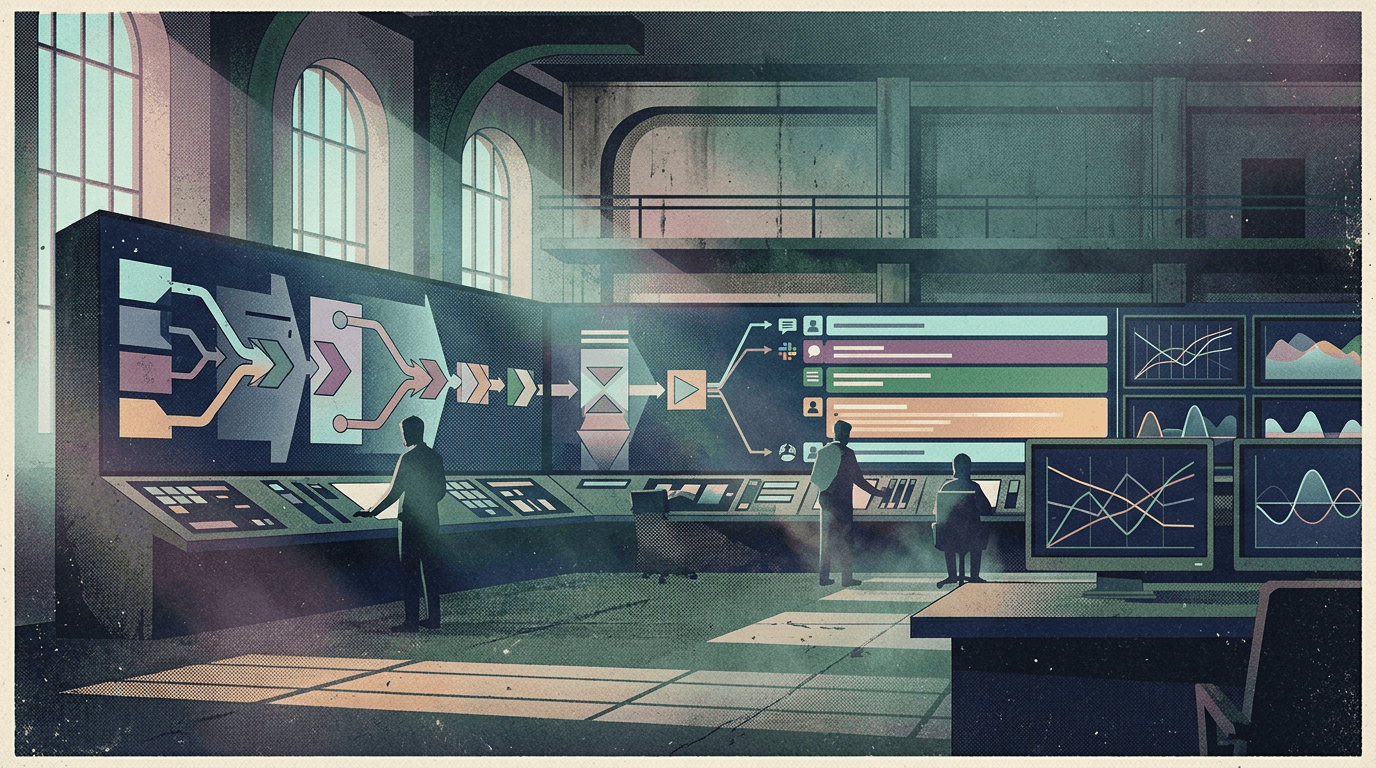

Not benchmark score. Not one-shot task completion.

Localizing failures this quickly is a control-plane problem, not a prompt-writing problem, and it requires explicit governance and human oversight.

If your team cannot pinpoint where a run stopped being recoverable, your “eval stack” is decorative.
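
One way to make the metric concrete is to treat it as the delay between a run being flagged as failed and the first unrecoverable step being recorded for it. The sketch below assumes that definition. The `FailedRun` record, its field names, and the choice of a median are illustrative assumptions, not something defined by any of the tools mentioned above.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class FailedRun:
    # Hypothetical record; field names are illustrative assumptions.
    run_id: str
    failure_detected_at: datetime                     # when the run was flagged as failed
    critical_step_identified_at: Optional[datetime]   # None if not yet localized


def median_time_to_identification(runs: list[FailedRun]) -> Optional[timedelta]:
    """Median delay between failure detection and critical-step identification.

    Runs that have not been localized yet are excluded, so report the
    unlocalized count alongside this number to avoid a flattering metric.
    """
    delays = [
        r.critical_step_identified_at - r.failure_detected_at
        for r in runs
        if r.critical_step_identified_at is not None
    ]
    return median(delays) if delays else None
```

A median is used here because a few runs that take days to localize would otherwise dominate the number; a team that cares about the worst cases might track a high percentile instead.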

Practical implications for builders, operators, and teams

- Log every step with enough evidence to replay decisions, not just outputs.

- Add failure taxonomy labels (tool misuse, policy breach, stale context, invalid state transition, etc.).

- Track “first unrecoverable step” per failed run, then rank recurring failure classes weekly.

- Wire pre-production evals to production traces so regressions are caught on real trajectories.

- Define intervention rules: when to auto-retry, when to route to a human, when to hard-stop.

This is how teams stop arguing about whether an agent is “smart” and start making it dependable.
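
The checklist above can be sketched in a few dozen lines. This is a minimal illustration under stated assumptions, not the API of AgentRx, the OpenAI Agents SDK, or OpenClaw: the `Step` record, the `recoverable` flag (assumed to be set by a reviewer or an automated judge), and the intervention thresholds are all hypothetical.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class FailureClass(Enum):
    # Labels taken from the list above; extend to fit your own taxonomy.
    TOOL_MISUSE = "tool_misuse"
    POLICY_BREACH = "policy_breach"
    STALE_CONTEXT = "stale_context"
    INVALID_STATE_TRANSITION = "invalid_state_transition"


class Action(Enum):
    AUTO_RETRY = "auto_retry"
    ROUTE_TO_HUMAN = "route_to_human"
    HARD_STOP = "hard_stop"


@dataclass
class Step:
    # Hypothetical trace record; field names are illustrative assumptions.
    index: int
    evidence: dict                           # inputs, tool calls, outputs needed for replay
    failure: Optional[FailureClass] = None   # taxonomy label, if the step failed
    recoverable: bool = True                 # assumed to be set by a reviewer or judge


def first_unrecoverable_step(steps: list[Step]) -> Optional[Step]:
    """Return the earliest step labeled as a failure the run could not recover from."""
    for step in sorted(steps, key=lambda s: s.index):
        if step.failure is not None and not step.recoverable:
            return step
    return None


def rank_failure_classes(failed_runs: list[list[Step]]) -> list[tuple[FailureClass, int]]:
    """Count the failure class at each run's first unrecoverable step, most common first."""
    counts = Counter()
    for steps in failed_runs:
        critical = first_unrecoverable_step(steps)
        if critical is not None:
            counts[critical.failure] += 1
    return counts.most_common()


def intervention_for(step: Step, retries_so_far: int, max_retries: int = 2) -> Action:
    """Example policy only: the mapping and the retry limit are assumptions."""
    if step.failure is FailureClass.POLICY_BREACH:
        return Action.HARD_STOP
    if step.recoverable and retries_so_far < max_retries:
        return Action.AUTO_RETRY
    return Action.ROUTE_TO_HUMAN
```

Running `rank_failure_classes` over a week of failed runs gives the recurring-failure ranking, and `intervention_for` shows how an explicit rule replaces ad hoc judgment about when to retry, escalate, or stop.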

Why this matters for OpenClaw users

OpenClaw gives you the runtime primitives: sessions, tools, memory, routing, and long-running workflows.

But production reliability is not just having primitives. It is having an operator shell that makes those primitives inspectable, steerable, and team-usable under pressure.

That is exactly where Clawpilot fits:

- OpenClaw runs the agent runtime and orchestration substrate.

- Clawpilot provides the practical operating layer for teams (hosting, UX, and Slack-native control).

If the new market baseline is failure localization speed, the shell around the runtime becomes strategic, not optional.

Closing

The next generation of agent teams will not be the ones with the fanciest autonomy demos.

They will be the teams that can answer one question in minutes, not hours: where did this run first become unrecoverable, and who owns the fix?