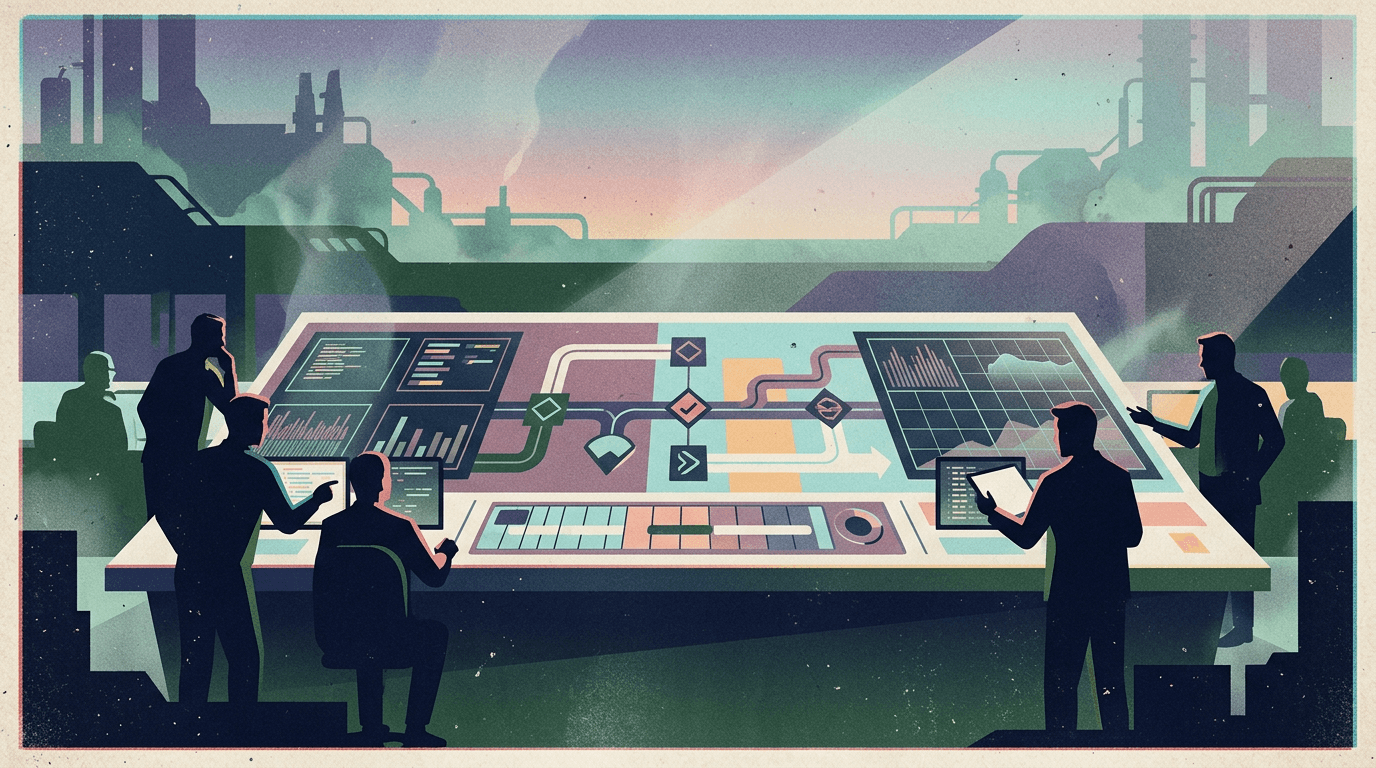

Context Engineering Is the Real Job Now

The era of "just write a better prompt" is over.

Anthropic published a detailed engineering guide this week titled Effective Context Engineering for AI Agents. It's not a blog post full of vibes — it's a formal articulation of a discipline shift that production teams have been living through for months:

The job is no longer prompt engineering. It's context engineering.

What changed, and why it matters

Anthropic's post defines context engineering as "the art and science of curating what will go into the limited context window from a constantly evolving universe of possible information."

That's a precise sentence, and it changes the conversation. A few highlights worth noting:

1) Context rot is real and universal

Anthropic highlights the concept of context rot: as the number of tokens in the context window grows, a model's ability to accurately recall information from that context decreases. This isn't a bug in one model. It follows from the transformer architecture: every token attends to every other token (n² pairwise relationships for n tokens), so adding more tokens doesn't just add information; it spreads attention thinner across everything already there.

This means bigger context windows are not the answer. Smarter curation is.
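To make the n² point concrete, here is a small arithmetic sketch. It only counts token-to-token relationships under full self-attention; it is not a measure of recall quality, and the function name is made up for illustration.

```python
def pairwise_relationships(n_tokens: int) -> int:
    """Count token-to-token relationships when every token attends to every token."""
    return n_tokens * n_tokens


# Growing the context 10x multiplies the number of relationships by 100x.
for n in (1_000, 10_000, 100_000):
    print(f"{n:>7,} tokens -> {pairwise_relationships(n):>17,} relationships")
```

The token count grows linearly while the number of relationships grows quadratically, which is the intuition behind attention getting stretched thin.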

2) The "attention budget" model

The post frames context as a finite resource with diminishing marginal returns. Every token you add costs attention. System prompts, tool descriptions, retrieved documents, conversation history, memory files — they all compete for the same budget.

The practical implication: agents often fail not because they lack information, but because their context carries too much noise and not enough signal. Operators who understand this treat context curation as their most important control surface.
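One way to picture the budget is as a packing problem. The sketch below is an illustration under loud assumptions: `ContextItem`, `estimate_tokens`, and `fit_to_budget` are hypothetical names, the whitespace word count is a crude stand-in for a real tokenizer, and greedy packing by priority is just one possible policy, not something the Anthropic post prescribes.

```python
from dataclasses import dataclass


@dataclass
class ContextItem:
    name: str
    text: str
    priority: int  # lower number = more important (assumed convention)


def estimate_tokens(text: str) -> int:
    # Crude stand-in for a real tokenizer: one "token" per whitespace-separated word.
    return len(text.split())


def fit_to_budget(items: list[ContextItem], budget: int) -> list[ContextItem]:
    """Greedily keep the most important items whose combined size fits the budget."""
    kept: list[ContextItem] = []
    used = 0
    for item in sorted(items, key=lambda i: i.priority):
        cost = estimate_tokens(item.text)
        if used + cost <= budget:
            kept.append(item)
            used += cost
    return kept


candidates = [
    ContextItem("system_prompt", "You are a careful release assistant.", priority=0),
    ContextItem("old_history", "word " * 50, priority=2),
    ContextItem("retrieved_doc", "Deploys to staging require a passing build.", priority=1),
]
selected = fit_to_budget(candidates, budget=20)
print([item.name for item in selected])
```

With a budget of 20 estimated tokens, the 50-word history is the piece that gets left out, while the system prompt and the retrieved document both fit.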

3) Sub-agent isolation is a context engineering pattern

Anthropic explicitly calls out sub-agent architectures as a core context engineering technique. By spawning focused agents with narrow context windows — each working on a specific subtask — you inherently manage context pollution. The sub-agent runs clean. It returns a distilled summary. The parent agent's context stays focused.

This is not a "multi-agent" buzzword play. It's an architectural decision to preserve attention budget.
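The pattern can be sketched in a few lines. This is a toy illustration, not any framework's API: `run_subagent` and `first_sentence_summary` are hypothetical names, and the summarizer is a placeholder for what would be a model call in a real system.

```python
from typing import Callable

Summarizer = Callable[[list[str]], str]


def run_subagent(task: str, documents: list[str], summarize: Summarizer) -> str:
    """Work on a subtask in a private context and return only a distilled summary."""
    # This list exists only inside the call; the parent never sees it.
    local_context = [f"Task: {task}", *documents]
    return summarize(local_context)


def first_sentence_summary(context: list[str]) -> str:
    # Placeholder for a model call: keep the first sentence of each document,
    # skipping the task line at index 0.
    firsts = [doc.split(".")[0].strip() for doc in context[1:]]
    return "; ".join(firsts)


parent_context = ["System: you are a release coordinator."]
raw_logs = [
    "Build 41 failed. Stack trace follows with 2,000 lines of output.",
    "Build 42 passed. Full test log attached.",
]

summary = run_subagent("Summarize recent build status", raw_logs, first_sentence_summary)
parent_context.append(f"Sub-agent result: {summary}")
print(parent_context)
```

The parent context grows by one short entry, while the raw logs stay confined to the sub-agent's local context.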

Why this framing matters for builders

If context engineering is the real job, most teams are still solving the wrong problem. They're spending time on:

- Better prompts (marginal gain)

- Longer context windows (counterproductive)

- More tools (noise injection)

Instead of:

- Isolating subtasks into sub-agents with clean context windows

- Persisting memory externally (files, databases) and retrieving only what's relevant per turn

- Designing context layering — system, task, tool, memory — as distinct architectural components

- Compacting aggressively — summarizing and discarding instead of accumulating
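Two of the practices above, per-turn retrieval from external memory and compaction of history, can be sketched as follows. The function names are hypothetical, keyword overlap is a deliberately naive stand-in for real retrieval, and the summarizer is passed in because in practice it would be a model call.

```python
from typing import Callable


def retrieve_relevant(notes: dict[str, str], query: str, limit: int = 2) -> list[str]:
    """Return the names of stored notes that share the most words with the query."""
    query_words = set(query.lower().split())
    scored = []
    for name, text in notes.items():
        overlap = len(query_words & set(text.lower().split()))
        if overlap:
            scored.append((overlap, name))
    # Highest overlap first; ties broken alphabetically for stable output.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [name for _, name in scored[:limit]]


def compact_history(
    turns: list[str], keep_last: int, summarize: Callable[[list[str]], str]
) -> list[str]:
    """Replace all but the most recent turns with a single summary entry."""
    if len(turns) <= keep_last:
        return list(turns)
    split = len(turns) - keep_last
    older, recent = turns[:split], turns[split:]
    return [f"Summary of {len(older)} earlier turns: {summarize(older)}", *recent]


notes = {
    "deploy.md": "deploy steps for staging cluster",
    "lunch.md": "team lunch preferences",
}
print(retrieve_relevant(notes, "how do I deploy to staging"))

history = ["a", "b", "c", "d"]
print(compact_history(history, keep_last=2, summarize=lambda older: " / ".join(older)))
```

Only the matching note is loaded for the turn, and the history shrinks from four entries to three, with the oldest two collapsed into one summary line.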

The teams shipping reliable agents in production have already figured this out. They just haven't had the vocabulary for it until now.

The OpenClaw connection

This is where OpenClaw's architecture becomes interesting from a context engineering perspective.

OpenClaw's session system — sessions_spawn for isolated sub-agents, memory files (MEMORY.md, daily notes), session persistence — is already a context engineering system. The design choices reflect exactly what Anthropic is describing:

- Sub-agent isolation: sessions_spawn creates agents with their own context windows, task scope, and lifecycle. The parent session stays clean. The sub-agent runs focused. This is context engineering by architecture.

- External memory: Workspace files (MEMORY.md, memory/*.md, TOOLS.md) act as persistent context layers that are loaded deliberately, not dumped into every turn. The agent reads what's relevant and ignores the rest.

- Session-scoped context: Each session has its own message history, its own tool state, its own context budget. Cross-session pollution doesn't happen because sessions are architecturally isolated.

- Compaction via summaries: When sub-agents complete, their results come back as distilled messages, not raw conversation dumps. The parent agent gets signal, not noise.

These aren't accidental features. They're context engineering patterns that were baked into the runtime before the industry had a name for them.

Why this matters for OpenClaw users

If context engineering is the discipline that separates toy demos from production agents, then OpenClaw's architecture is already ahead of the curve. But architecture alone isn't enough — you need to operate it well.

That's where the shell matters. Clawpilot exists to make OpenClaw's context engineering architecture usable by teams: structured workflows, clear session management, memory that actually gets maintained, observability into what's happening in each agent's context, and reliable human oversight at every decision point.

The teams that win with agents won't be the ones with the smartest models or the cleverest prompts. They'll be the ones who engineer context deliberately — and run it on infrastructure that makes that discipline practical.

Context engineering is the real job now. The question is whether your stack supports it.