Agent Communication Is Solved — Agent Memory Is Not

The agent infrastructure layer just hit an inflection point. Google's A2A protocol crossed 100 technology partners. MCP's Python and TypeScript SDKs recorded 97 million monthly downloads. Confluent announced A2A support this month. NIST opened its Agent Security RFI with a March 2026 deadline.

Agent-to-agent communication is becoming a solved problem. The protocols work. The partnerships exist. The plumbing is ready.

The production bottleneck has already moved.

The real problem is memory, not messaging

When teams deploy multi-agent workflows today, they discover a brutal asymmetry: getting agents to talk to each other is easy. Getting them to remember what they said — and what it meant — is hard.

This isn't a theoretical concern. It's showing up in three concrete failure modes:

1. Architectural amnesia

Agents repeatedly re-solve the same problem, forget previous decisions, and generate conflicting outputs within the same workflow. A MongoDB engineering post this week described it bluntly: without explicit memory engineering, multi-agent systems exhibit "architectural amnesia"; they can coordinate in the moment but have no durable recall of why they made earlier choices.

The cost is both silent and expensive: duplicate tool and API calls, plus inconsistent handoffs that look correct in the moment but accumulate drift over hours.
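One way to give agents durable recall is a shared decision log that every agent checks before it solves anything. This is a minimal sketch that assumes a single in-process store for one workflow. `Decision`, `DecisionLog`, and `resolve` are hypothetical names. They are not an API from the MongoDB post or from any framework, and a production version would persist records to a database rather than a dict.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


@dataclass(frozen=True)
class Decision:
    """A durable record of what was decided, why, and by whom."""
    key: str          # normalized name of the problem being decided
    outcome: str
    rationale: str    # kept so later agents (and operators) can see *why*
    decided_by: str


@dataclass
class DecisionLog:
    """Hypothetical decision store shared by every agent in one workflow."""
    decisions: Dict[str, Decision] = field(default_factory=dict)
    solver_runs: List[str] = field(default_factory=list)

    def resolve(self, key: str, agent: str,
                solve: Callable[[], Tuple[str, str]]) -> Decision:
        # Reuse an earlier decision instead of re-solving the same problem.
        if key in self.decisions:
            return self.decisions[key]
        self.solver_runs.append(key)  # reached only when no prior decision exists
        outcome, rationale = solve()
        decision = Decision(key, outcome, rationale, decided_by=agent)
        self.decisions[key] = decision
        return decision


log = DecisionLog()
first = log.resolve("choose-database", "planner",
                    lambda: ("postgres", "workflow needs transactions"))
# A later agent asks the same question and gets the recorded answer back.
second = log.resolve("choose-database", "coder",
                     lambda: ("mongodb", "schema looks flexible"))
print(second.outcome, second.decided_by)  # postgres planner
print(len(log.solver_runs))               # 1
```

The second agent receives the planner's earlier answer and its rationale. It does not re-run the solver and produce a conflicting choice.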

2. The context handoff gap

A Galileo AI analysis this month documented the "Goldilocks problem" in agent handoffs. Too much context overwhelms downstream agents and burns tokens. Too little context produces "lossy compression" — agents missing critical details and taking wrong actions.

There is no standard protocol for how much context one agent should pass to another. A2A defines the transport. MCP defines the tool interface. Neither defines the memory contract between agents.
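A budgeted selection step is one way to make the Goldilocks trade-off explicit instead of accidental. This sketch assumes that the sending agent tags each context item with a priority and a `required` flag. It also approximates tokens by word count. `ContextItem`, `estimate_tokens`, and `select_context` are hypothetical, and a real system would use the model's own tokenizer.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ContextItem:
    text: str
    priority: int           # higher means more useful to the downstream agent
    required: bool = False  # must reach the next agent, whatever the budget


def estimate_tokens(text: str) -> int:
    # Crude proxy: one token per whitespace-separated word (real tokenizers differ).
    return len(text.split())


def select_context(items: List[ContextItem], budget: int) -> List[ContextItem]:
    """Take all required items, then optional items by priority, within a token budget."""
    required = [i for i in items if i.required]
    used = sum(estimate_tokens(i.text) for i in required)
    if used > budget:
        # Fail loudly rather than silently dropping a critical detail.
        raise ValueError(f"required context needs {used} tokens, budget is {budget}")
    chosen = list(required)
    optional = sorted((i for i in items if not i.required),
                      key=lambda i: i.priority, reverse=True)
    for item in optional:
        cost = estimate_tokens(item.text)
        if used + cost <= budget:  # skip items that would overflow the budget
            chosen.append(item)
            used += cost
    return chosen


items = [
    ContextItem("customer id 4411 plan enterprise", priority=10, required=True),
    ContextItem("user prefers email over phone", priority=5),
    ContextItem("full transcript of the previous forty minute support call", priority=2),
]
picked = select_context(items, budget=10)
print([i.text for i in picked])
```

Raising an error when required context does not fit is a deliberate choice. It turns silent "lossy compression" into a failure that someone has to handle, while optional material is trimmed quietly to avoid overwhelming the downstream agent.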

3. Token cost explosion

Multi-agent systems can burn up to 15x more tokens than single-agent workflows. The dominant cost isn't the reasoning each agent does; it's context sharing. Every agent-to-agent handoff requires re-establishing state, re-sending relevant memory, and re-validating assumptions. Without scoped memory, teams pay the full context tax on every interaction.
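A back-of-the-envelope model shows why the context tax compounds. Assume, hypothetically, that each of n handoffs adds s tokens of new state. If every handoff re-sends all accumulated state, the k-th handoff carries k·s tokens, so the total is s·n(n+1)/2 and grows quadratically. A scoped handoff that carries a fixed c-token slice costs c·n in total. The numbers below are illustrative assumptions, not measurements. They are not meant to reproduce the 15x figure.

```python
def broadcast_cost(handoffs: int, step_tokens: int) -> int:
    """Tokens re-sent when every handoff carries the full accumulated context."""
    # Handoff k carries the state produced by all k steps so far.
    return sum(k * step_tokens for k in range(1, handoffs + 1))


def scoped_cost(handoffs: int, scoped_tokens: int) -> int:
    """Tokens re-sent when every handoff carries a fixed, contract-sized slice."""
    return handoffs * scoped_tokens


# Hypothetical workload: 10 handoffs, 2,000 new tokens of state per step,
# and a 1,500-token scoped handoff payload.
full = broadcast_cost(10, 2_000)
scoped = scoped_cost(10, 1_500)
print(full, scoped, round(full / scoped, 1))  # 110000 15000 7.3
```

The gap widens as workflows get longer, because the broadcast term is quadratic in the number of handoffs and the scoped term is linear.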

What actually works: memory-first multi-agent design

The teams shipping reliable multi-agent workflows in March 2026 share a pattern: they designed memory before they designed coordination.

Three practical moves:

1. Session-scoped memory with explicit handoff contracts. Instead of broadcasting full context between agents, define a handoff schema: what state is required, what is optional, what must never leak. This is the "context contract" idea applied at the inter-agent boundary.

2. Shared memory with role-aware access control. Not every agent should read every memory layer. A research agent doesn't need billing state. A support agent doesn't need code deployment history. Role-aware memory scoping reduces token spend and prevents accidental context contamination.

3. Observable memory writes. Log every memory write and retrieval. If you cannot explain why an agent remembered something, you cannot debug why it acted on it. This is the multi-agent equivalent of structured logging — boring, essential, and almost universally skipped.
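Together, the three moves need only a small amount of code. This sketch assumes an in-process dict per memory layer and uses Python's standard `logging` module as the audit sink. `HandoffContract`, `ScopedMemory`, and the role and layer names are hypothetical illustrations. They are not part of OpenClaw, Clawpilot, or any of the frameworks discussed in this post.

```python
import logging
from dataclasses import dataclass, field
from typing import Any, Dict, FrozenSet, List

logging.basicConfig(level=logging.INFO, format="%(message)s")
logger = logging.getLogger("memory")


@dataclass(frozen=True)
class HandoffContract:
    """Move 1: what a handoff must carry, may carry, and must never carry."""
    required: FrozenSet[str]
    optional: FrozenSet[str] = frozenset()
    forbidden: FrozenSet[str] = frozenset()

    def build(self, state: Dict[str, Any]) -> Dict[str, Any]:
        missing = self.required - set(state)
        if missing:
            raise KeyError(f"handoff missing required keys: {sorted(missing)}")
        allowed = (self.required | self.optional) - self.forbidden
        # Allowlist: keys not named in the contract are dropped, so nothing leaks by default.
        return {k: v for k, v in state.items() if k in allowed}


@dataclass
class ScopedMemory:
    """Moves 2 and 3: role-aware access to memory layers, with every access audited."""
    grants: Dict[str, FrozenSet[str]]  # role -> memory layers that role may touch
    layers: Dict[str, Dict[str, Any]] = field(default_factory=dict)
    audit: List[Dict[str, Any]] = field(default_factory=list)

    def _audit(self, op: str, role: str, layer: str, key: str, reason: str) -> None:
        allowed = layer in self.grants.get(role, frozenset())
        entry = {"op": op, "role": role, "layer": layer,
                 "key": key, "reason": reason, "allowed": allowed}
        self.audit.append(entry)  # recorded before any denial, so attempts are visible
        logger.info("memory op=%s role=%s layer=%s key=%s allowed=%s reason=%s",
                    op, role, layer, key, allowed, reason)
        if not allowed:
            raise PermissionError(f"role {role!r} may not {op} layer {layer!r}")

    def write(self, role: str, layer: str, key: str, value: Any, reason: str) -> None:
        self._audit("write", role, layer, key, reason)
        self.layers.setdefault(layer, {})[key] = value

    def read(self, role: str, layer: str, key: str, reason: str) -> Any:
        self._audit("read", role, layer, key, reason)
        return self.layers.get(layer, {}).get(key)


memory = ScopedMemory(grants={
    "research": frozenset({"research"}),
    "support": frozenset({"support", "billing"}),
})
memory.write("support", "billing", "plan", "enterprise", reason="CRM lookup")
try:
    memory.read("research", "billing", "plan", reason="background check")
except PermissionError as err:
    print(err)  # role 'research' may not read layer 'billing'

contract = HandoffContract(required=frozenset({"ticket_id"}),
                           optional=frozenset({"summary"}),
                           forbidden=frozenset({"api_key"}))
payload = contract.build({"ticket_id": 42, "summary": "refund request",
                          "api_key": "secret", "raw_transcript": "long text"})
print(payload)  # {'ticket_id': 42, 'summary': 'refund request'}
```

Two design choices do most of the work. First, the contract is an allowlist, so a key that nobody planned for (`raw_transcript`) is dropped instead of leaked. Second, denied accesses are logged before the error is raised, so the audit trail records attempts as well as successes.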

The framework landscape is catching up

The tooling is converging on these patterns, but most teams still assemble it manually:

- LangGraph offers explicit state management with checkpointing, letting teams define memory boundaries between agent nodes.

- CrewAI models agents as crew members with role-scoped memory (ChromaDB + SQLite), keeping context tied to function.

- Mem0 provides a dedicated multi-level memory layer (user, session, agent) with vector search and metadata filtering.

- Google Vertex AI Agent Builder routes hot memory through external services like Redis for latency control.

- Redis has positioned itself as the shared-state backbone for production multi-agent systems, offering fast reads with consistency guarantees.

The pattern is clear: memory is becoming an infrastructure concern, not a prompt-engineering afterthought.

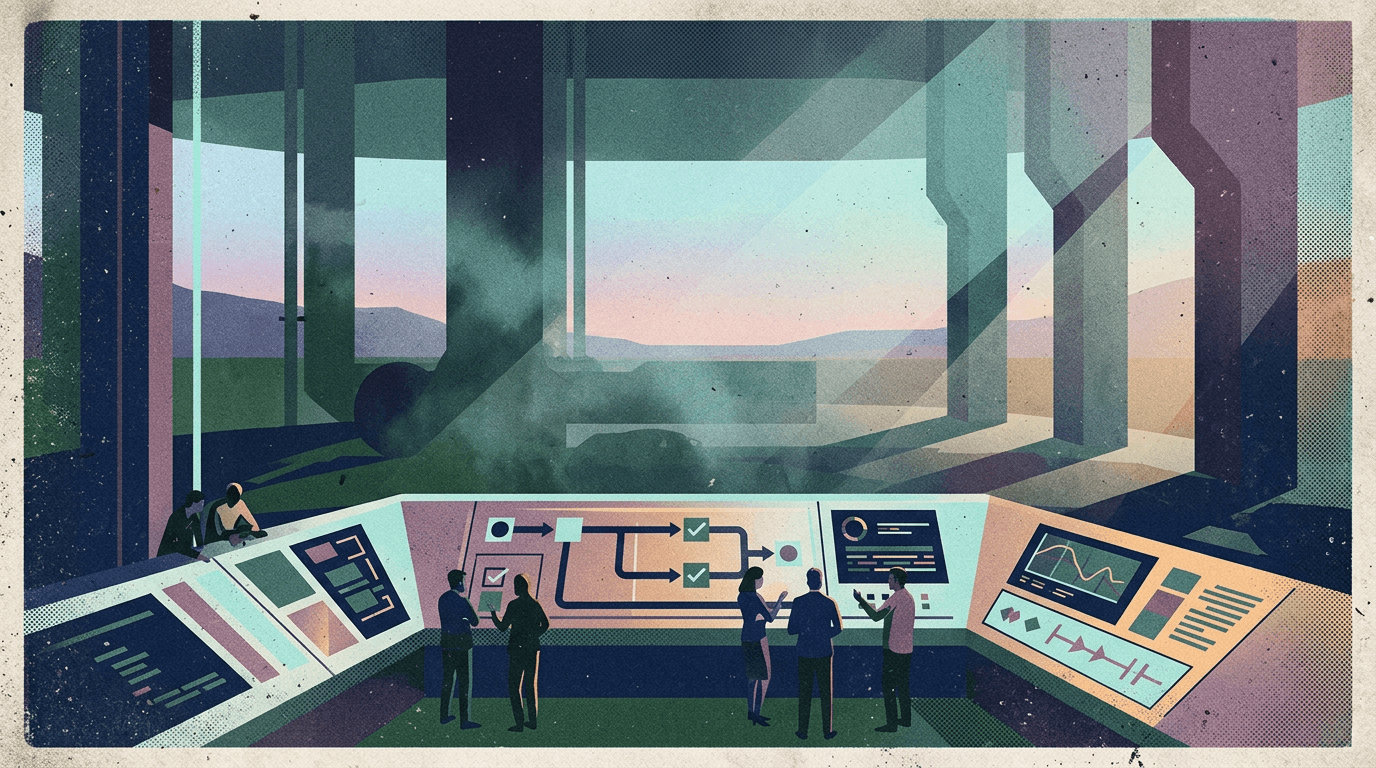

Why this matters for OpenClaw users

OpenClaw gives you the runtime — sessions, tools, model routing, automation triggers. It already supports the primitives for memory-aware agent workflows: session persistence, scoped tool access, and structured handoffs between runs.

But the operational complexity of multi-agent memory lives in the shell around the runtime:

- Clawpilot provides the shared control surface where teams can see what each agent remembered, what it passed on, and where memory diverged.

- Session governance means handoffs between agents are traceable, not mysterious.

- Operator-facing controls let teams adjust memory scope, set token budgets per workflow, and audit memory writes without touching runtime code.

The A2A and MCP protocols handle how agents talk. OpenClaw handles what agents can do. Clawpilot handles what agents remember and who can see it.

That last layer is where production reliability lives. Human oversight at the memory boundary is what separates a demo from a deployable system in March 2026.

Closing

Agent communication protocols solved the easy part. The hard part — getting multi-agent systems to remember coherently, hand off context correctly, and stay within cost budgets — is now the primary production challenge.

Teams that treat memory as infrastructure (scoped, observable, role-aware) will ship reliable multi-agent workflows. Teams that treat it as a prompt problem will keep burning tokens and debugging phantom failures.

The protocol wars are wrapping up. The memory wars are just starting.