Context Contracts Beat Bigger Frameworks

The loudest narrative in agent land is still "more autonomous, more multi-agent, more framework."

The real signal this week is the opposite: teams are tightening context boundaries and execution contracts so agents stop breaking in production.

If you operate agent workflows for a real team, this matters more than model benchmarks.

What the signal from the last 24-72 hours says

Across X, LinkedIn, and Reddit threads and long-form posts this week, the same pattern keeps recurring:

- Reliability problems still come down to UI fragility, hidden assumptions, and missing observability.

- Lightweight loops are winning over giant orchestration stacks for many practical use cases.

- "Context" is becoming an operational object (owned, versioned, auditable), not a fuzzy prompt concept.

That same shape is visible in product and release activity.

Three concrete proofs

1) Production UI automation is now sold as a reliability problem, not an intelligence problem

A new Dev.to breakdown on Amazon Nova Act focuses directly on production failure modes: changing UI selectors, flakiness, and the need for observability and repeatability in browser agents.

That framing is the key shift. Teams are buying stability contracts, not flashy one-shot demos.

2) Builders are pushing back on heavyweight agent stacks

Another widely shared Dev.to post on Karpathy’s autoresearch argues for minimal closed loops (change → run → evaluate → iterate) over giant frameworks.

That is exactly how operator teams de-risk rollout:

- keep scope narrow,

- expose measurable outcomes,

- avoid architecture debt before value exists.
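To make the loop concrete, here is a minimal sketch of change → run → evaluate → iterate in plain Python. It is not the autoresearch code; `closed_loop`, `propose`, and `evaluate` are hypothetical names, and the toy objective is an assumption chosen only so the loop has something measurable to improve.

```python
import random
from typing import Callable, List, Tuple


def closed_loop(
    initial: float,
    propose: Callable[[float], float],
    evaluate: Callable[[float], float],
    max_iters: int = 200,
) -> Tuple[float, float, List[float]]:
    """Keep a candidate only when its measured score improves."""
    best, best_score = initial, evaluate(initial)
    history = [best_score]  # the measurable outcome, one entry per step
    for _ in range(max_iters):
        candidate = propose(best)       # change
        score = evaluate(candidate)     # run + evaluate
        if score > best_score:          # iterate on measured gains only
            best, best_score = candidate, score
        history.append(best_score)
    return best, best_score, history


# Toy usage (assumption): nudge a number toward 3.0 with seeded random steps.
rng = random.Random(0)
best, score, history = closed_loop(
    initial=0.0,
    propose=lambda x: x + rng.uniform(-0.5, 0.5),
    evaluate=lambda x: -(x - 3.0) ** 2,
)
```

The whole loop fits on one screen, and `history` gives you the outcome curve without any orchestration layer in between.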

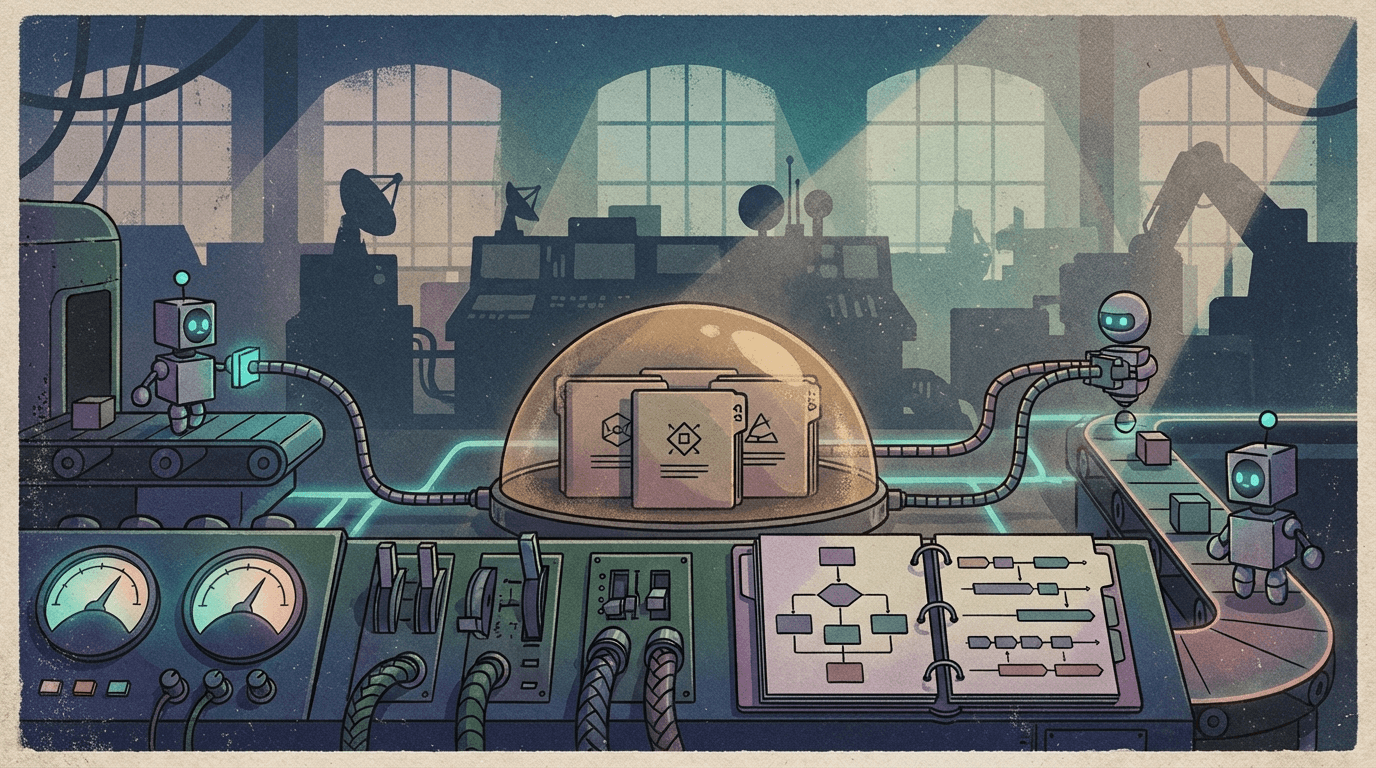

3) Context ownership is becoming a first-class governance issue

A new Medium piece from Data Science Collective frames the core conflict clearly: technical context, business context, and decision context are different, and agents fail when organizations treat them as one blob.

I agree with this take. If nobody owns context, nobody owns agent mistakes.
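One way to stop treating context as one blob is to tag every source with its kind and its owner, then check for gaps before a workflow ships. The sketch below is an illustration under my own assumptions: `ContextKind`, `ContextSource`, `unowned`, and `missing_kinds` are hypothetical names, not part of any product or of the Medium piece.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class ContextKind(Enum):
    TECHNICAL = "technical"
    BUSINESS = "business"
    DECISION = "decision"


@dataclass(frozen=True)
class ContextSource:
    name: str
    kind: ContextKind
    owner: Optional[str]  # None means nobody is accountable for this source
    version: str


def unowned(sources: List[ContextSource]) -> List[str]:
    """Names of sources that have no accountable owner."""
    return [s.name for s in sources if not s.owner]


def missing_kinds(sources: List[ContextSource]) -> List[ContextKind]:
    """Context kinds the workflow does not cover at all."""
    present = {s.kind for s in sources}
    return [k for k in ContextKind if k not in present]


# Hypothetical inventory for one workflow.
sources = [
    ContextSource("api-schema", ContextKind.TECHNICAL, "platform-team", "v3"),
    ContextSource("refund-policy", ContextKind.BUSINESS, None, "2024-11"),
]
```

Here `unowned(sources)` flags `refund-policy`, and `missing_kinds(sources)` shows that decision context is not covered at all.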

Why this is strategically relevant for Clawpilot users

Clawpilot users are operators and builders, not toy-demo tourists.

Your failure mode is rarely "model too weak." It is usually one of these:

- workflow state is implicit,

- memory scope is unclear,

- tool permissions drift,

- handoffs between sessions are non-deterministic.

So the winning pattern is:

- Define context inputs per workflow (what is allowed, what is authoritative).

- Track execution state explicitly (session, tool calls, retries, outcomes).

- Add quality gates before high-impact actions.

- Keep each agent loop small enough to debug fast.

In plain terms: boring contracts beat magical prompts.
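A rough sketch of what such a contract can look like in code, assuming a Python workflow runner. Every name here (`ContextContract`, `RunState`, `ToolCall`, `run_tool`) is hypothetical and not an OpenClaw or Clawpilot API; the point is that allowed tools, retries, and outcomes become explicit data instead of implied behavior.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, List


@dataclass(frozen=True)
class ContextContract:
    workflow: str
    authoritative_inputs: frozenset  # inputs the agent may treat as truth
    allowed_tools: frozenset         # anything else is denied
    max_retries: int = 2


@dataclass
class ToolCall:
    tool: str
    attempts: int
    outcome: str  # "ok", "failed", or "denied"


@dataclass
class RunState:
    session_id: str
    calls: List[ToolCall] = field(default_factory=list)


def run_tool(contract: ContextContract, state: RunState,
             tool: str, fn: Callable[[], Any]) -> Any:
    """Run one tool call under the contract and record what happened."""
    if tool not in contract.allowed_tools:
        state.calls.append(ToolCall(tool, 0, "denied"))
        raise PermissionError(
            f"{tool!r} is not allowed in workflow {contract.workflow!r}")
    last_error = None
    total_attempts = contract.max_retries + 1
    for attempt in range(1, total_attempts + 1):
        try:
            result = fn()
        except Exception as exc:  # record the failure and retry
            last_error = exc
            continue
        state.calls.append(ToolCall(tool, attempt, "ok"))
        return result
    state.calls.append(ToolCall(tool, total_attempts, "failed"))
    raise RuntimeError(f"{tool!r} failed after retries") from last_error
```

Because permissions live on a frozen contract, tool drift shows up as a `denied` entry in `state.calls` rather than as a silent change in behavior.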

What to do this week (operator checklist)

If you run OpenClaw/Clawpilot workflows in production, do this now:

- Pick one workflow and write a one-page context contract:

  - required inputs,

  - allowed tools,

  - success/failure criteria,

  - escalation path.

- Add a pre-action verification step for any outbound or irreversible action.

- Add a post-run summary artifact (what was read, decided, sent, changed).

- Kill one "framework layer" that exists only to feel sophisticated.
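The verification step and the summary artifact from the checklist can start as small as this. It is a sketch under stated assumptions: `RunSummary`, `guarded_action`, and the `IRREVERSIBLE` set are hypothetical names, and the actions and addresses are made up for illustration.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Callable, List

# Assumption: which actions count as outbound or irreversible is your call.
IRREVERSIBLE = {"send_email", "delete_record"}


@dataclass
class RunSummary:
    workflow: str
    read: List[str] = field(default_factory=list)
    decided: List[str] = field(default_factory=list)
    sent: List[str] = field(default_factory=list)
    changed: List[str] = field(default_factory=list)
    blocked: List[str] = field(default_factory=list)


def guarded_action(summary: RunSummary, action: str, target: str,
                   verify: Callable[[str], bool],
                   perform: Callable[[str], None]) -> bool:
    """Verify before irreversible actions and log the result either way."""
    if action in IRREVERSIBLE and not verify(target):
        summary.blocked.append(f"{action}:{target}")
        return False
    perform(target)
    bucket = summary.sent if action.startswith("send") else summary.changed
    bucket.append(f"{action}:{target}")
    return True


# Hypothetical usage: only send to addresses on an approved domain.
summary = RunSummary("weekly-digest")
outbox: List[str] = []
is_approved = lambda addr: addr.endswith("@example.com")
guarded_action(summary, "send_email", "ops@example.com", is_approved, outbox.append)
guarded_action(summary, "send_email", "x@other.org", is_approved, outbox.append)
artifact = json.dumps(asdict(summary), indent=2)  # store this with the run
```

The JSON artifact is the part people skip and then miss: it is what lets someone else answer "what did the agent actually do?" without replaying the session.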

OpenClaw angle: the platform is already moving this direction

Recent OpenClaw release activity also aligns with this trend:

- security hardening at the gateway/browser boundary,

- expanded memory/search capabilities,

- stronger onboarding for local+cloud operating modes,

- better session/model control surfaces.

That is not random feature churn. It is infrastructure for context contracts.

Final take

2026’s useful agent stack will not be the one with the most orchestration diagrams.

It will be the one where context is explicit, ownership is clear, and every critical action is observable.

For teams shipping with Clawpilot, that is the shortest path from "impressive" to "dependable."